
by Staff Writers Stanford CA (SPX) Oct 29, 2018

In the basement of the Gates Computer Science Building at Stanford University, a screen attached to a red robotic arm lights up. A pair of cartoon eyes blinks. "Meet Bender," says Ajay Mandlekar, PhD student in electrical engineering.

Bender is one of the robot arms that a team of Stanford researchers is using to test two frameworks that, together, could make it faster and easier to teach robots basic skills. The RoboTurk framework allows people to direct the robot arms in real time with a smartphone and a browser by showing the robot how to carry out tasks like picking up objects. SURREAL speeds the learning process by running multiple experiences at once, essentially allowing the robots to learn from many experiences simultaneously.

"With RoboTurk and SURREAL, we can push the boundary of what robots can do by combining lots of data collected by humans and coupling that with large-scale reinforcement learning," said Mandlekar, a member of the team that developed the frameworks.

The group will be presenting RoboTurk and SURREAL Oct. 29 at the Conference on Robot Learning in Zurich, Switzerland.

Humans teaching robots

To humans, the task seems ridiculously easy. But for the robots of today, it's quite difficult. Robots typically learn by interacting with and exploring their environment - which usually results in lots of random arm waving - or from large datasets. Neither of these is as efficient as getting some human help. In the same way that parents teach their children to brush their teeth by guiding their hands, people can demonstrate to robots how to do specific tasks.

However, those lessons aren't always perfect. When Zhu pressed hard on his phone screen and the robot released its grip, the wooden steak hit the edge of the bin and clattered onto the table. "Humans are by no means optimal at this," Mandlekar said, "but this experience is still integral for the robots."

Faster learning in parallel

"With SURREAL, we want to accelerate this process of interacting with the environment," said Linxi Fan, a PhD student in computer science and a member of the team. These frameworks drastically increase the amount of data for the robots to learn from.

"The twin frameworks combined can provide a mechanism for AI-assisted human performance of tasks where we can bring humans away from dangerous environments while still retaining a similar level of task execution proficiency," said postdoctoral fellow Animesh Garg, a member of the team that developed the frameworks.

The team envisions that robots will be an integral part of everyday life in the future: helping with household chores, performing repetitive assembly tasks in manufacturing or completing dangerous tasks that may pose a threat to humans.

"You shouldn't have to tell the robot to twist its arm 20 degrees and inch forward 10 centimeters," said Zhu. "You want to be able to tell the robot to go to the kitchen and get an apple."

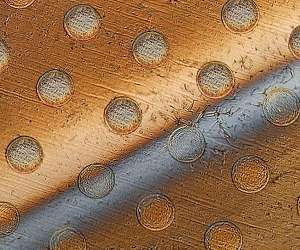
